Exploring the Role of Computer Vision in Self-Driving Vehicles

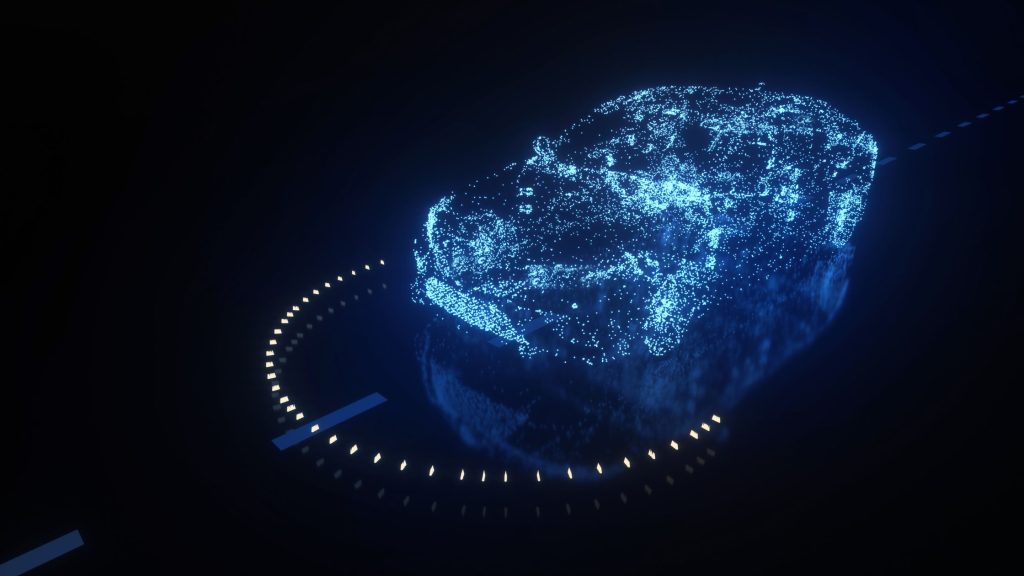

The development of computer vision technology is a groundbreaking aspect of the automotive industry, reshaping how we think about transportation. Autonomous vehicles rely heavily on this technology to perceive and respond to their surroundings, making it a critical component in the quest for safer and more efficient driving solutions.

At its core, computer vision enables cars to interpret and understand visual information, thus facilitating a range of functionalities essential for autonomous navigation. Key processes involved include:

- Data processing: Autonomous vehicles are equipped with cameras and sensors that gather immense amounts of visual data. This information is processed in real-time, allowing the vehicle to react promptly to changes in its environment, such as a child darting into the road or sudden traffic changes.

- Object detection: A pivotal capability for self-driving technology, object detection involves identifying and classifying various elements within the vehicle’s field of vision, including pedestrians, other vehicles, cyclists, and critical traffic signs such as stop and yield signs.

- Environmental mapping: By creating detailed 3D maps of their surroundings, autonomous vehicles can navigate complex environments better. These maps help the vehicle understand spatial relationships and obstacles, guiding it through unfamiliar routes.
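
Object detectors typically propose many overlapping candidate boxes for the same pedestrian or vehicle, and a common post-processing step keeps only the best one. The sketch below shows greedy non-maximum suppression (NMS) built on intersection-over-union (IoU). It is an illustration, not any particular manufacturer's pipeline; the `(x1, y1, x2, y2)` box format and the 0.5 threshold are assumptions.

```python
from typing import List, Tuple

# Assumed box format: (x1, y1, x2, y2) in pixels, with x2 > x1 and y2 > y1.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: List[Box], scores: List[float], iou_threshold: float = 0.5) -> List[int]:
    """Greedy NMS: keep the highest-scoring box, discard remaining boxes that
    overlap it by more than iou_threshold, and repeat. Returns kept indices."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    while order:
        best = order.pop(0)
        keep.append(best)
        order = [i for i in order if iou(boxes[best], boxes[i]) <= iou_threshold]
    return keep
```

For example, two heavily overlapping boxes around the same cyclist (IoU of about 0.68) collapse to the higher-scoring one, while a separate box elsewhere in the frame survives.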

While the potential of computer vision is vast, several challenges must be confronted to realize fully autonomous driving:

- Weather variability: Factors such as rain, snow, and fog can obscure visual data, presenting significant hurdles for reliable operation. For instance, a heavy downpour may hinder the effectiveness of cameras, leading to reduced detection accuracy.

- Complex urban environments: Cities are filled with unpredictable elements, from jaywalking pedestrians to erratic drivers. Navigating through such scenarios requires advanced algorithms capable of real-time decision-making, prioritizing safety over speed.

- Safety and reliability: Ensuring that autonomous vehicles operate reliably and fail safely under all conditions is paramount. Regulatory bodies, such as the National Highway Traffic Safety Administration (NHTSA) in the U.S., are closely examining these technologies to establish standards and guidelines.

Amid these challenges, innovations in computer vision are paving the way for progress. Techniques like deep learning and sensor fusion enhance the capability of autonomous systems, enabling them to learn from vast amounts of data collected from real-world driving experiences. With these advancements, the accuracy of detecting and responding to various scenarios continues to improve.

This evolving technology signifies more than just a shift in transportation; it prompts critical discussions regarding regulation, ethics, and the impact on urban infrastructure. As cities begin adapting to accommodate these vehicles, questions arise about liability in the event of accidents and the equitable distribution of technology.

In this exploration of computer vision for autonomous vehicles, we will delve deeper into the incredible innovations and persistent challenges that could define the future of transportation. Buckle up, as we journey through this fascinating intersection of technology, ethics, and human experience.


Challenges Facing Computer Vision in Autonomous Vehicles

The intricate world of computer vision in autonomous vehicles is fraught with challenges that manufacturers must navigate before self-driving cars can become a common sight on our roads. Despite significant advancements in machine learning and sensor technology, real-world driving conditions present unique obstacles that must be addressed to ensure safety and functionality.

One of the foremost challenges lies in weather variability. Inclement conditions, such as rain, fog, and snow, can significantly reduce the effectiveness of visual data capture. For example, during heavy snowfall, visibility can drop dramatically, making it difficult for sensors to detect road signs or pedestrians. Moreover, ice accumulating on camera lenses can distort images, further impairing the vehicle’s ability to interpret its surroundings. As a result, engineers have strong incentives to develop robust systems that can withstand or compensate for these environmental factors.
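
One simple way a system might notice that a camera's view has been degraded, for instance by ice or water on the lens, is a sharpness check. The sketch below uses the variance of the Laplacian, a classic blur heuristic from image processing: sharp frames have strong edges and score high, while smeared or washed-out frames score low. This is an assumed illustration, not a documented production technique, and the `threshold` value is hypothetical and would need tuning per camera.

```python
from statistics import pvariance
from typing import List

Image = List[List[float]]  # grayscale intensities, row-major, at least 3x3


def laplacian_variance(img: Image) -> float:
    """Variance of the 4-neighbour Laplacian over interior pixels."""
    responses = []
    for y in range(1, len(img) - 1):
        for x in range(1, len(img[0]) - 1):
            lap = (img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1]
                   - 4 * img[y][x])
            responses.append(lap)
    return pvariance(responses)


def looks_degraded(img: Image, threshold: float = 100.0) -> bool:
    # Hypothetical threshold: frames with very little edge energy are flagged.
    return laplacian_variance(img) < threshold
```

A flagged frame would not by itself say why visibility dropped; in practice such a signal could only prompt the system to lean more heavily on other sensors.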

Complex urban environments pose another significant hurdle. Unlike rural settings, cities are teeming with unpredictable elements. Autonomous vehicles must contend with erratic driver behavior, pedestrians who may step into the street without warning, and countless other variables in real-time. To navigate such intricate scenarios, the algorithms used for object detection must be exceptionally advanced. They need to draw upon a wealth of training data and implement machine learning techniques that allow for both object classification and contextual understanding. This real-time decision-making not only prioritizes rapid responses but also emphasizes safety, which is paramount in urban driving conditions.
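
Real-time decisions of this kind are often framed in terms of time-to-collision (TTC): how long until the vehicle reaches an object ahead if neither changes speed. The sketch below computes a constant-velocity TTC and compares it to a braking threshold. The 2.0-second threshold and the function names are hypothetical, chosen only to illustrate the idea.

```python
import math

BRAKE_TTC_S = 2.0  # hypothetical threshold in seconds, not a published standard


def time_to_collision(gap_m: float, ego_speed_mps: float, obj_speed_mps: float) -> float:
    """Constant-velocity time-to-collision with an object ahead in the same path.
    Returns math.inf when the gap is not closing."""
    closing_speed = ego_speed_mps - obj_speed_mps
    if closing_speed <= 0:
        return math.inf
    return gap_m / closing_speed


def should_brake(gap_m: float, ego_speed_mps: float, obj_speed_mps: float,
                 threshold_s: float = BRAKE_TTC_S) -> bool:
    """Flag a braking decision when the predicted TTC falls below the threshold."""
    return time_to_collision(gap_m, ego_speed_mps, obj_speed_mps) < threshold_s
```

For a pedestrian standing 20 m ahead of a car moving at 15 m/s, the TTC is about 1.33 s, below the assumed threshold, so `should_brake(20, 15, 0)` returns `True`.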

Furthermore, the importance of safety and reliability cannot be overstated. Regulatory bodies, including the National Highway Traffic Safety Administration (NHTSA), are intensively scrutinizing how these vehicles operate under various conditions. Establishing stringent safety standards and guidelines is necessary to build public trust in autonomous systems. A vehicle’s ability to handle a wide variety of situations without human intervention is critical, particularly in high-stakes scenarios like emergency braking or collision avoidance. Under SAE International’s J3016 levels of driving automation, Level 4 (high automation) and Level 5 (full automation) systems must handle unforeseen circumstances without relying on a human driver to take over; a Level 4 system does so within a defined operational design domain, while Level 5, full autonomy in the strict sense, has no such restriction.

Several further challenges compound these:

- Latency in Processing: The speed at which a vehicle processes data and makes decisions is crucial. Every delay adds distance traveled before the vehicle reacts, so computer vision systems must keep end-to-end latency, from image capture to control output, as low as possible.

- Data Privacy Concerns: As autonomous vehicles collect detailed information about their surroundings, issues regarding data privacy and security emerge, necessitating responsible data handling practices.

- Integration with Existing Infrastructure: Autonomous vehicles need to communicate effectively with traffic signals, road signage, and other components of urban infrastructure, raising the need for upgrades and modernization.
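
The cost of processing latency can be made concrete with simple arithmetic: the distance a vehicle covers before it can react is its speed multiplied by the end-to-end latency. The sketch below performs that conversion; the speeds and latencies in the example are illustrative, not measurements from any vehicle.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled while the perception pipeline is still processing."""
    speed_mps = speed_kmh * 1000 / 3600  # km/h -> m/s
    return speed_mps * latency_ms / 1000  # ms -> s
```

At 100 km/h, a 100 ms delay means the car travels roughly 2.8 m before any response begins, which is why shaving tens of milliseconds off the pipeline matters.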

Despite these challenges, the field of computer vision is continuously evolving, opening doors to new innovations that enhance the performance and safety of autonomous vehicles. Emerging techniques like deep learning and sensor fusion are being explored, as the ability to combine data from multiple sources can help create a more accurate understanding of the vehicle’s environment. As we delve deeper into the innovations shaping the future of transportation, the promise of self-driving technology continues to ignite curiosity and hope for a new era in mobility.

The Role of Computer Vision in Autonomous Vehicles: Challenges and Innovations

In the rapidly evolving landscape of autonomous vehicles, computer vision plays a pivotal role in enabling these vehicles to achieve a higher level of automation and accuracy. This technology allows vehicles to interpret and understand their surroundings and make the real-time decisions crucial for safe navigation. However, the journey toward fully autonomous driving is fraught with challenges that the industry must address.

One of the primary challenges lies in the vehicle’s ability to process visual information under varying environmental conditions. Factors such as poor lighting, adverse weather, and complex urban environments can drastically affect the performance of computer vision algorithms. For instance, heavy rain or snow can obscure camera lenses, leading to misinterpretations of the data collected. Innovations are underway to enhance the robustness of these systems, integrating AI, machine learning, and multi-sensor methodologies that can combine inputs from cameras, LiDAR, and radar to create a comprehensive understanding of the environment.
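
A simple way to see why combining inputs helps is inverse-variance weighting: when several sensors independently measure the same quantity, each estimate is weighted by how much it can be trusted, and the fused result is more certain than any single input. The sketch below is a minimal, static form of the idea (a Kalman filter update generalizes it over time). It assumes independent measurement noise, and the camera and radar numbers in the example are hypothetical.

```python
from typing import List, Tuple


def fuse_estimates(estimates: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse independent (value, variance) measurements of the same quantity by
    inverse-variance weighting. Returns (fused_value, fused_variance)."""
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, estimates)) / total
    return fused, 1.0 / total
```

For example, a camera range estimate of 20.0 m with variance 4.0 and a radar estimate of 21.0 m with variance 1.0 fuse to 20.8 m with variance 0.8: the result leans toward the more precise radar yet is tighter than either sensor alone.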

Another significant challenge is the need for real-time processing. Autonomous vehicles must evaluate the data they collect almost instantaneously to react appropriately to the dynamic environments in which they operate. To tackle this, advancements in hardware and software technologies are critical. For example, the deployment of powerful GPUs and dedicated neural network processors has proven effective in accelerating image processing tasks and ensuring that decision-making is both swift and reliable.
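
Real-time processing is often reasoned about as a per-frame time budget: at a given camera frame rate, every stage of the pipeline must finish before the next frame arrives. The sketch below computes the remaining slack for one frame. The stage names and timings are hypothetical placeholders, not figures from any real system.

```python
from typing import Dict


def frame_slack_ms(stage_ms: Dict[str, float], fps: float) -> float:
    """Remaining time in milliseconds within one frame period.
    A negative result means the pipeline cannot keep up with the camera."""
    budget_ms = 1000.0 / fps
    return budget_ms - sum(stage_ms.values())


# Hypothetical per-frame stage timings in milliseconds.
stages = {"preprocess": 3.0, "detection": 18.0, "tracking": 4.0, "handoff": 2.0}
```

With these assumed timings, `frame_slack_ms(stages, 30)` leaves about 6.3 ms of headroom, whereas at 60 fps the same pipeline would overrun its roughly 16.7 ms budget. Faster accelerators shrink the stage times and so widen this margin.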

The continuous development of deep learning models for computer vision is driving steady improvements in object detection, lane recognition, and pedestrian tracking. These advances not only enhance vehicle safety but also contribute to smoother rides and a better overall user experience. Ongoing research in computer vision is essential to the wider adoption of, and public trust in, autonomous vehicles, moving the industry toward a future where fully autonomous driving becomes a reality.
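
Lane recognition pipelines commonly end by fitting a curve through pixels classified as lane markings. The sketch below fits a straight line by ordinary least squares, writing x as a function of y because lane lines run nearly vertically in a forward-facing camera image. Using a first-order line is a simplifying assumption; curved roads would call for a higher-order fit, and the pixel coordinates in the example are hypothetical.

```python
from typing import List, Tuple


def fit_lane_line(points: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Least-squares fit of x = m*y + b to (x, y) lane-marking pixels.
    Requires at least two points with distinct y values."""
    n = len(points)
    mean_x = sum(p[0] for p in points) / n
    mean_y = sum(p[1] for p in points) / n
    syy = sum((p[1] - mean_y) ** 2 for p in points)
    sxy = sum((p[1] - mean_y) * (p[0] - mean_x) for p in points)
    m = sxy / syy
    b = mean_x - m * mean_y
    return m, b
```

Given marking pixels that lie on x = 0.5·y + 100, the fit recovers a slope of 0.5 and an intercept of 100; with noisy detections it returns the line minimizing squared horizontal error.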

| Category | Brief Description |
|---|---|
| Safety Enhancements | Computer vision improves vehicle safety by enhancing detection of obstacles, vehicles, and pedestrians. |
| Efficient Navigation | Real-time processing capability offers precise navigation through complex environments, optimizing routes and reducing congestion. |

As the field of autonomous driving continues to advance, the integration of computer vision will remain a fundamental element in facing prevalent challenges and realizing innovative solutions. It is this intersection of technology and ingenuity that paves the way for a safer, more efficient, and interconnected future in transportation.


Innovations Driving Computer Vision in Autonomous Vehicles

As the challenges facing computer vision in autonomous vehicles are addressed, a wave of innovations is shaping the trajectory of this technology and enhancing its robustness. Central to these advancements is deep learning, a subset of machine learning that uses large datasets to train neural networks capable of making predictions with remarkable accuracy. This has propelled autonomous driving systems forward, enabling them not only to identify static objects but also to track and analyze moving obstacles in real time.

A prominent example of deep learning in action is the convolutional neural network (CNN), an architecture that excels at visual recognition tasks. By learning filters that respond to patterns in pixel data, CNNs can distinguish between objects such as cars, bicycles, and pedestrians with high accuracy. Companies like Waymo and Tesla have used vast amounts of annotated data to train their systems, enabling them to handle complex scenarios such as recognizing a child suddenly darting out onto the road.
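
The core operation inside a CNN layer is sliding a small filter across the image and summing element-wise products at each position. The sketch below implements that operation (technically cross-correlation, which deep learning frameworks call convolution) with no padding and a stride of 1. In a real CNN the kernel weights are learned during training; the hand-written vertical-edge kernel here is only an assumed example.

```python
from typing import List

Matrix = List[List[float]]


def conv2d_valid(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2D cross-correlation: no padding, stride 1.
    Output size is (H - kh + 1) x (W - kw + 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = [[0.0] * out_w for _ in range(out_h)]
    for y in range(out_h):
        for x in range(out_w):
            out[y][x] = sum(image[y + i][x + j] * kernel[i][j]
                            for i in range(kh) for j in range(kw))
    return out


# Hand-set vertical-edge kernel; a trained CNN would learn its own filters.
edge_kernel = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]
```

Applied to a 3×6 image that steps from dark (0) to bright (1) halfway across, the filter responds only where the edge lies, producing `[[0, 3, 3, 0]]`.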

Another pivotal innovation is sensor fusion, which combines data from multiple sensors, such as cameras, lidar, and radar. This multimodal approach yields a deeper understanding of the environment, producing a comprehensive model that exploits the strengths and compensates for the weaknesses of each sensor type. For example, cameras provide high-resolution imagery, while lidar offers precise distance measurements. Working in tandem, they create a more detailed picture, allowing the vehicle to detect objects even in challenging conditions like poor lighting or inclement weather.
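
Before camera and lidar data can reinforce each other, lidar points must be placed in the camera image so that depth can be attached to pixels. The sketch below applies the standard pinhole camera model for that step. It assumes each point has already been transformed into the camera's coordinate frame using extrinsic calibration, and the intrinsic parameters in the example (`fx`, `fy`, `cx`, `cy`) are hypothetical.

```python
from typing import Optional, Tuple


def project_to_image(point_cam: Tuple[float, float, float],
                     fx: float, fy: float, cx: float, cy: float
                     ) -> Optional[Tuple[float, float]]:
    """Pinhole projection of a 3D point in the camera frame
    (X right, Y down, Z forward, metres) to pixel coordinates (u, v).
    Returns None for points at or behind the camera plane."""
    X, Y, Z = point_cam
    if Z <= 0:
        return None
    return fx * X / Z + cx, fy * Y / Z + cy
```

With assumed intrinsics of fx = fy = 1000 and a principal point at (640, 360), a lidar return 10 m ahead, 2 m to the right, and 0.5 m below the camera lands at pixel (840, 410), where its distance can be attached to whatever the camera detected there.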

Moreover, advancements in image processing algorithms are enhancing how vehicles interpret visual information. Techniques such as real-time object segmentation allow vehicles to isolate specific objects within their field of view, significantly aiding in the decision-making process. This ability can be crucial when navigating crowded urban environments, where distinguishing between a parked vehicle and an active one can mean the difference between a safe drive and a collision.
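
Segmentation models produce a per-pixel mask, but the vehicle needs to reason about individual objects. A classic, simple way to split a binary mask into separate objects is connected-component labeling, sketched below with a breadth-first flood fill over 4-connected neighbours. Modern instance-segmentation networks separate objects differently; this is an assumed illustration of the "isolate each object" idea, not how any particular vehicle does it.

```python
from collections import deque
from typing import List


def label_components(mask: List[List[int]]) -> List[List[int]]:
    """4-connected component labeling of a binary mask.
    Returns a label image: 0 = background, 1..N = separate objects."""
    h, w = len(mask), len(mask[0])
    labels = [[0] * w for _ in range(h)]
    next_label = 0
    for sy in range(h):
        for sx in range(w):
            if mask[sy][sx] and not labels[sy][sx]:
                next_label += 1  # found an unlabeled object pixel: new object
                labels[sy][sx] = next_label
                queue = deque([(sy, sx)])
                while queue:  # flood-fill every pixel connected to it
                    y, x = queue.popleft()
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                        if 0 <= ny < h and 0 <= nx < w and mask[ny][nx] and not labels[ny][nx]:
                            labels[ny][nx] = next_label
                            queue.append((ny, nx))
    return labels
```

Two blobs that do not touch, such as a parked car and a vehicle pulling out beside it, receive different labels, so each can then be tracked and classified on its own.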

Testing and simulation have also evolved dramatically. Advanced simulation software enables developers to create intricate virtual environments for autonomous vehicles to navigate. By simulating a variety of driving conditions, from bright, sunny days to heavy rain, engineers can expose their systems to potential challenges without the risks associated with real-world testing. This virtual approach not only accelerates the development cycle but can also help demonstrate to regulatory bodies that safety standards are being met.
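
Covering "a variety of driving conditions" systematically usually means enumerating combinations of scenario parameters. The sketch below expands a parameter grid into every combination with `itertools.product`. The parameter names and values are hypothetical, and real simulation campaigns often sample the space rather than enumerate it, since the number of combinations grows multiplicatively.

```python
from itertools import product
from typing import Dict, List


def build_scenarios(params: Dict[str, List[object]]) -> List[Dict[str, object]]:
    """Expand a grid of simulation parameters into every combination."""
    keys = list(params)
    return [dict(zip(keys, values)) for values in product(*(params[k] for k in keys))]


# Hypothetical scenario dimensions for a simulation run.
grid = {
    "weather": ["clear", "rain", "fog"],
    "lighting": ["day", "night"],
    "pedestrian_crossing": [False, True],
}
```

Here three weather settings, two lighting conditions, and two pedestrian behaviours already yield 12 distinct scenarios; adding a handful more dimensions quickly pushes the count into the thousands.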

Additionally, the collaborative nature of the tech industry is driving rapid progress in computer vision technologies. Partnerships among tech companies, automotive manufacturers, and academic institutions foster the sharing of knowledge and expertise. One notable initiative is the formation of industry standards aimed at ensuring seamless communication between vehicles and infrastructure. By working together, stakeholders are paving the way for a more conducive environment for autonomous vehicles.

- Adaptive Learning: Cutting-edge systems now incorporate adaptive learning techniques that allow vehicles to improve their perception capabilities as they encounter new scenarios over time, continuously refining their algorithms.

- Edge Computing: The shift toward edge computing keeps data processing on board the vehicle, avoiding the latency of round trips to remote data centers, which is critical for quick decision-making.

- LiDAR Advancements: Recent enhancements in lidar technology provide finer resolution and extended range, which significantly contributes to the vehicle’s ability to recognize and navigate complex environments.
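
Why finer lidar resolution matters at long range follows from basic geometry: adjacent laser returns separated by a fixed angle spread farther apart the more distant the surface, roughly range × angle (in radians) for small angles. The sketch below computes that spacing. The angular resolutions in the example are hypothetical values, not specifications of any sensor.

```python
import math


def point_spacing(range_m: float, angular_resolution_deg: float) -> float:
    """Approximate gap between adjacent lidar returns at a given range,
    using the small-angle arc length: range * angle_in_radians."""
    return range_m * math.radians(angular_resolution_deg)
```

At 100 m, a 0.2° step leaves about 0.35 m between neighbouring points, while halving the step to 0.1° halves the gap to about 0.17 m, putting more returns on a distant pedestrian or debris and making it easier to recognize.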

As computer vision continues to evolve, these innovations promise not only to mitigate existing challenges but also to set the stage for a new era of safe and effective autonomous driving. On closer inspection of these technologies, the future of transportation appears full of potential for change and improvement.


Conclusion

The journey toward fully autonomous vehicles is undeniably complex, with computer vision at its core facing both formidable challenges and groundbreaking innovations. As we have explored, the technology has evolved significantly, with deep learning models and sensor fusion greatly enhancing the capabilities of these systems. The ability to process vast amounts of visual data in real-time and accurately interpret the surrounding environment has transformed how vehicles navigate and react to dynamic conditions.

Additionally, advancements in image processing algorithms and adaptive learning techniques help vehicles not only operate efficiently but also improve over time as they encounter new scenarios. These innovations, combined with the shift toward edge computing, pave the way for faster, safer decision-making on the road. Enhanced testing methodologies and collaborative efforts between industry leaders also contribute significantly to improving reliability and safety.

Looking ahead, the potential of computer vision in autonomous vehicles appears immense. As technology continues to mature, regulatory frameworks will need to evolve in tandem, fostering a safe and robust environment for public adoption. Moreover, innovations in LiDAR and related technologies promise to further push boundaries, making fully autonomous driving not just a possibility but a tangible reality. For those interested in the future of transportation, following the developments in computer vision will be essential, as it holds the key to unlocking the next chapters of mobility, safety, and efficiency on our roads.